View Interpolation Along a Chain of Cameras

Free-viewpoint video aims to display videos in which the user can freely choose the camera position. The two main techniques either use a geometric model of the scene or synthesize the intermediate views directly from the input images (image-based rendering). Like most practical approaches, we combine both: this reduces the number of input views required for view synthesis while remaining robust to errors in geometry reconstruction.

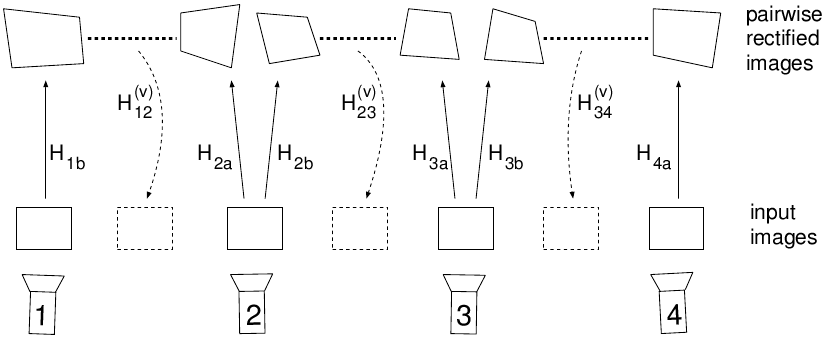

We present an algorithm for interpolating intermediate views along a chain of cameras. There is no restriction on camera placement, as long as the distance between successive cameras is not too large. The interpolated views lie on a virtual poly-line defined by the ordered set of cameras. Our algorithm requires no strong camera calibration, since the necessary epipolar geometry is estimated from the input images themselves. A preprocessing step first rectifies the images to canonical epipolar geometry and computes disparity images. The actual view interpolation then uses this data to synthesize intermediate views in real time.
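To make the poly-line concrete, the sketch below maps a single global position t along the chain to the pair of neighboring cameras whose images are interpolated and to a local interpolation weight alpha. It is a minimal illustration, not the authors' implementation; the parametrization (t runs from 0 at the first camera to n-1 at the last, one unit per segment) and the function name `locate_on_chain` are assumptions. No camera positions are needed, which fits the weakly calibrated setting.

```python
import math
from typing import Tuple


def locate_on_chain(t: float, num_cameras: int) -> Tuple[int, float]:
    """Map a global chain parameter t in [0, num_cameras - 1] to the index i of the
    camera pair (i, i + 1) and a local weight alpha in [0, 1].

    Hypothetical helper: alpha = 0 means camera i, alpha = 1 means camera i + 1.
    """
    if num_cameras < 2:
        raise ValueError("need at least two cameras")
    # Clamp so the virtual view never leaves the ends of the chain.
    t = min(max(t, 0.0), num_cameras - 1.0)
    # The last segment also owns the end point t = num_cameras - 1.
    i = min(int(math.floor(t)), num_cameras - 2)
    return i, t - i
```

With four cameras, t = 1.25 selects the second and third cameras (pair index 1) with alpha = 0.25, and t = 3.0 selects the last pair with alpha = 1.0.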

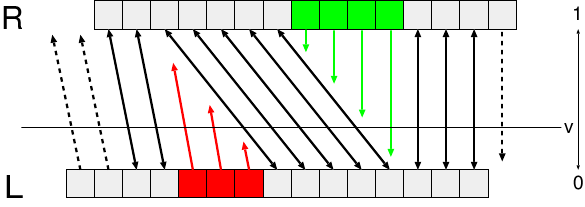

Our algorithm estimates the epipolar geometry between each pair of successive cameras and rectifies the input images so that the epipolar lines become horizontal (see the figure above). A disparity image is estimated for each camera pair, and the intermediate views are interpolated in the rectified coordinate system. Before display, the interpolated views are projected back into the original, unrectified coordinate system.
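Because the images are rectified, a pixel moves only horizontally as the virtual viewpoint travels between the two cameras. The sketch below forward-warps one rectified scanline of the left image to an intermediate position alpha, letting the nearer surface (larger disparity) win when two pixels land on the same column, and adds a helper that maps a rectified point back through a 3x3 homography. It assumes the common convention that a left-image pixel at column x with disparity d >= 0 appears at column x - d in the right image. The function names and the homography `h` supplied by the caller are hypothetical; the text does not state how the de-rectifying transform for a virtual view is obtained.

```python
from typing import List, Optional, Sequence, Tuple


def warp_scanline(colors: Sequence[float], disparities: Sequence[float],
                  alpha: float) -> List[Optional[float]]:
    """Forward-warp one rectified left-image scanline to viewpoint alpha.

    alpha = 0 is the left camera, alpha = 1 the right camera (assumed convention).
    A pixel at column x with disparity d lands at column x - alpha * d; the row
    is unchanged because the images are rectified. Unfilled columns stay None.
    """
    width = len(colors)
    out: List[Optional[float]] = [None] * width
    depth: List[float] = [-1.0] * width  # disparity z-buffer: larger = closer
    for x, (c, d) in enumerate(zip(colors, disparities)):
        xt = int(round(x - alpha * d))
        if 0 <= xt < width and d > depth[xt]:
            out[xt] = c
            depth[xt] = d
    return out


def apply_homography(h: Sequence[Sequence[float]], x: float,
                     y: float) -> Tuple[float, float]:
    """Map a point (x, y) through a 3x3 homography h given as nested lists."""
    u = h[0][0] * x + h[0][1] * y + h[0][2]
    v = h[1][0] * x + h[1][1] * y + h[1][2]
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return u / w, v / w
```

For example, warping colors `[10, 20, 30, 40, 50]` with disparities `[0, 0, 2, 2, 0]` to alpha = 0.5 gives `[10, 30, 40, None, 50]`: the foreground pixel 30 covers the background pixel 20, and column 3 becomes a disoccluded hole that must be filled from the other camera.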

The interpolated view is computed as a weighted combination of the two neighboring camera images. Since our depth estimator explicitly detects occluded regions, the interpolation can switch to using a single image in those regions, as indicated below.
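A minimal per-pixel sketch of this blending, assuming both neighboring images have already been warped to the intermediate viewpoint alpha and that pixels flagged as occluded (or otherwise unfilled) are marked with None. The linear weights (1 - alpha) and alpha, and the name `blend_views`, are assumptions; the text only states that the two images are weighted and that occluded regions fall back to one image.

```python
from typing import List, Optional, Sequence


def blend_views(left_warped: Sequence[Optional[float]],
                right_warped: Sequence[Optional[float]],
                alpha: float) -> List[Optional[float]]:
    """Combine two views warped to viewpoint alpha (0 = left, 1 = right).

    None marks a pixel with no valid sample in that view, e.g. because the
    depth estimator flagged it as occluded there.
    """
    out: List[Optional[float]] = []
    for l, r in zip(left_warped, right_warped):
        if l is not None and r is not None:
            # Visible in both views: weight by distance to each camera.
            out.append((1.0 - alpha) * l + alpha * r)
        elif l is not None:
            out.append(l)  # occluded in the right view: use the left only
        elif r is not None:
            out.append(r)  # occluded in the left view: use the right only
        else:
            out.append(None)  # hole in both views
    return out
```

With `blend_views([10.0, 20.0, None, None], [30.0, None, 40.0, None], 0.25)` the first pixel is visible in both views and blends to 15.0, the second and third fall back to the single view in which they are visible, and the last remains a hole.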

Example Results

The demo shows real-time interpolation across four input images. The cameras were uncalibrated; rectification is computed from the input images alone.

References

- Dirk Farin, Yannick Morvan, Peter H. N. de With: "View Interpolation Along a Chain of Weakly Calibrated Cameras," IEEE Workshop on Content Generation and Coding for 3D-Television, Eindhoven, Netherlands, June 2006.